Migrating from dockershim

This section presents information you need to know when migrating from dockershim to other container runtimes.

Since the announcement of dockershim deprecation in Kubernetes 1.20, there have been questions about how this will affect various workloads and Kubernetes installations. Our Dockershim Removal FAQ is there to help you understand the problem better.

Dockershim will be removed from Kubernetes in the v1.24 release. If you use Docker via dockershim as your container runtime and wish to upgrade to v1.24, it is recommended that you either migrate to another runtime or find an alternative means to obtain Docker Engine support.

If you're not sure whether you are using Docker, find out what container runtime is used on a node.

Your cluster might have more than one kind of node, although this is not a common configuration.

These tasks will help you to migrate:

What's next

- Check out container runtimes to understand your options for a container runtime.

- There is a GitHub issue to track discussion about the deprecation and removal of dockershim.

- If you found a defect or other technical concern relating to migrating away from dockershim, you can report an issue to the Kubernetes project.

1 - Changing the Container Runtime on a Node from Docker Engine to containerd

This task outlines the steps needed to update your container runtime from Docker to containerd. It applies to cluster operators running Kubernetes 1.23 or earlier. It also covers an example scenario for migrating from dockershim to containerd. If you prefer an alternative container runtime, you can pick one from the Container Runtimes page.

Before you begin

Note:

This section links to third party projects that provide functionality required by Kubernetes. The Kubernetes project authors aren't responsible for these projects, which are listed alphabetically. To add a project to this list, read the content guide before submitting a change. More information.

Install containerd. For more information, see containerd's installation documentation. For specific prerequisites, follow the containerd guide.

Drain the node

kubectl drain <node-to-drain> --ignore-daemonsets

Replace <node-to-drain> with the name of the node you are draining.

Stop the Docker daemon

systemctl stop kubelet

systemctl disable docker.service --now

Install containerd

Follow the guide for detailed steps to install containerd.

- Install the containerd.io package from the official Docker repositories. Instructions for setting up the Docker repository for your respective Linux distribution and installing the containerd.io package can be found at Install Docker Engine.

- Configure containerd:

sudo mkdir -p /etc/containerd
containerd config default | sudo tee /etc/containerd/config.toml

- Restart containerd:

sudo systemctl restart containerd

Start a PowerShell session, set $Version to the desired version (for example, $Version="1.4.3"), and then run the following commands:

- Download containerd:

curl.exe -L https://github.com/containerd/containerd/releases/download/v$Version/containerd-$Version-windows-amd64.tar.gz -o containerd-windows-amd64.tar.gz
tar.exe xvf .\containerd-windows-amd64.tar.gz

- Extract and configure:

Copy-Item -Path ".\bin\" -Destination "$Env:ProgramFiles\containerd" -Recurse -Force
cd $Env:ProgramFiles\containerd\
.\containerd.exe config default | Out-File config.toml -Encoding ascii

# Review the configuration. Depending on setup you may want to adjust:
# - the sandbox_image (Kubernetes pause image)
# - cni bin_dir and conf_dir locations
Get-Content config.toml

# (Optional - but highly recommended) Exclude containerd from Windows Defender Scans
Add-MpPreference -ExclusionProcess "$Env:ProgramFiles\containerd\containerd.exe"

- Start containerd:

.\containerd.exe --register-service
Start-Service containerd

Edit the file /var/lib/kubelet/kubeadm-flags.env and add the containerd runtime to the flags: --container-runtime=remote and --container-runtime-endpoint=unix:///run/containerd/containerd.sock.
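After editing the file, a quick check can confirm that both flags are present. The following is a minimal sketch, not part of the official procedure; the function name kubelet_flags_use_containerd is hypothetical, and it assumes the flags are written in the --flag=value form shown above.

```shell
# Hypothetical helper: succeeds only if both containerd flags named above
# appear in the kubelet flags file. Defaults to the kubeadm file path.
kubelet_flags_use_containerd() {
  flags_file="${1:-/var/lib/kubelet/kubeadm-flags.env}"
  # -F treats the pattern as a fixed string; "--" stops grep option parsing.
  grep -qF -- '--container-runtime=remote' "$flags_file" &&
    grep -qF -- '--container-runtime-endpoint=unix:///run/containerd/containerd.sock' "$flags_file"
}

# Example (run on the node):
#   kubelet_flags_use_containerd && echo "flags look right"
```

The check only reads the file, so it is safe to run before restarting the kubelet.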

If you use kubeadm, be aware that the kubeadm tool stores the CRI socket for each host as an annotation in the Node object for that host. To change it, run the following command on a machine that has the kubeadm /etc/kubernetes/admin.conf file.

kubectl edit no <node-name>

This will start a text editor where you can edit the Node object.

To choose a text editor you can set the KUBE_EDITOR environment variable.

- Change the value of kubeadm.alpha.kubernetes.io/cri-socket from /var/run/dockershim.sock to the CRI socket path of your choice (for example, unix:///run/containerd/containerd.sock). Note that new CRI socket paths should be prefixed with unix://.

- Save the changes in the text editor, which will update the Node object.
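If you prefer not to use an interactive editor, the same annotation can likely be set with kubectl annotate. This is a sketch of an alternative, not the documented procedure; the function name set_cri_socket_annotation is hypothetical, and the default socket is the containerd example from the step above.

```shell
# Hypothetical helper: overwrites the kubeadm CRI socket annotation on a node.
# $1 is the node name; $2 is the socket (defaults to the containerd example).
set_cri_socket_annotation() {
  node="$1"
  socket="${2:-unix:///run/containerd/containerd.sock}"
  # --overwrite is needed because the annotation already exists on the Node.
  kubectl annotate node "$node" --overwrite \
    "kubeadm.alpha.kubernetes.io/cri-socket=$socket"
}

# Example:
#   set_cri_socket_annotation <node-name>
```

As with kubectl edit, run this from a machine whose kubectl configuration has permission to modify Node objects.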

Restart the kubelet

systemctl start kubelet

Verify that the node is healthy

Run kubectl get nodes -o wide. containerd should appear as the runtime for the node you just changed.

Remove Docker Engine

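Finally, if everything goes well, remove Docker. Use the command that matches your node's package manager:

# yum-based distributions such as CentOS
sudo yum remove docker-ce docker-ce-cli

# dnf-based distributions such as Fedora
sudo dnf remove docker-ce docker-ce-cli

# apt-based distributions such as Debian and Ubuntu
sudo apt-get purge docker-ce docker-ce-cli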

2 - Migrate Docker Engine nodes from dockershim to cri-dockerd

This page shows you how to migrate your Docker Engine nodes to use cri-dockerd

instead of dockershim. Follow these steps if your clusters run Kubernetes 1.23

or earlier and you want to continue using Docker Engine after

you upgrade to Kubernetes 1.24 and later, or if you just want to move off the

dockershim component.

What is cri-dockerd?

In Kubernetes 1.23 and earlier, Docker Engine used a component called the

dockershim to interact with Kubernetes system components such as the kubelet.

The dockershim component is deprecated and will be removed in Kubernetes 1.24. A

third-party replacement, cri-dockerd, is available. The cri-dockerd adapter

lets you use Docker Engine through the Container Runtime Interface.

If you want to migrate to cri-dockerd so that you can continue using Docker

Engine as your container runtime, you should do the following for each affected

node:

- Install cri-dockerd.

- Cordon and drain the node.

- Configure the kubelet to use cri-dockerd.

- Restart the kubelet.

- Verify that the node is healthy.

Test the migration on non-critical nodes first.

You should perform the following steps for each node that you want to migrate

to cri-dockerd.

Before you begin

Cordon and drain the node

- Cordon the node to stop new Pods scheduling on it:

kubectl cordon <NODE_NAME>

Replace <NODE_NAME> with the name of the node.

- Drain the node to safely evict running Pods:

kubectl drain <NODE_NAME> \
--ignore-daemonsets

The following steps apply to clusters set up using the kubeadm tool. If you use

a different tool, you should modify the kubelet using the configuration

instructions for that tool.

- Open /var/lib/kubelet/kubeadm-flags.env on each affected node.

- Modify the --container-runtime-endpoint flag to unix:///var/run/cri-dockerd.sock.
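The flag change above can be previewed with sed before you touch the file. This is a minimal sketch under two assumptions: the flag already exists in the file in the --flag=value form, and the hypothetical helper name with_cri_dockerd_endpoint is only for illustration. If the flag is absent, the output is unchanged and you need to add the flag by hand.

```shell
# Hypothetical helper: prints the flags file with the endpoint flag rewritten
# to the cri-dockerd socket. It does not modify the file itself.
with_cri_dockerd_endpoint() {
  flags_file="${1:-/var/lib/kubelet/kubeadm-flags.env}"
  # The value ends at the next space or double quote.
  sed -E 's#(--container-runtime-endpoint=)[^" ]+#\1unix:///var/run/cri-dockerd.sock#' "$flags_file"
}

# Example: review the result, then apply it yourself if it looks right.
#   with_cri_dockerd_endpoint
```

Printing instead of editing in place keeps the original file intact until you have reviewed the new value.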

The kubeadm tool stores the node's socket as an annotation on the Node object

in the control plane. To modify this socket for each affected node:

- Edit the YAML representation of the Node object:

KUBECONFIG=/path/to/admin.conf kubectl edit no <NODE_NAME>

Replace the following:

  - /path/to/admin.conf: the path to the kubectl configuration file, admin.conf.
  - <NODE_NAME>: the name of the node you want to modify.

- Change kubeadm.alpha.kubernetes.io/cri-socket from /var/run/dockershim.sock to unix:///var/run/cri-dockerd.sock.

- Save the changes. The Node object is updated on save.

Restart the kubelet

systemctl restart kubelet

Verify that the node is healthy

To check whether the node uses the cri-dockerd endpoint, follow the

instructions in Find out which runtime you use.

The --container-runtime-endpoint flag for the kubelet should be unix:///var/run/cri-dockerd.sock.

Uncordon the node

Uncordon the node to let Pods schedule on it:

kubectl uncordon <NODE_NAME>

What's next

3 - Find Out What Container Runtime is Used on a Node

This page outlines steps to find out what container runtime

the nodes in your cluster use.

Depending on the way you run your cluster, the container runtime for the nodes may have been pre-configured, or you may need to configure it yourself. If you're using a managed Kubernetes service, there might be vendor-specific ways to check what container runtime is configured for the nodes. The method described on this page should work whenever you are allowed to run kubectl.

Before you begin

Install and configure kubectl. See the Install Tools section for details.

Find out the container runtime used on a Node

Use kubectl to fetch and show node information:

kubectl get nodes -o wide

The output is similar to the following. The column CONTAINER-RUNTIME outputs

the runtime and its version.

For Docker Engine, the output is similar to this:

NAME     STATUS   VERSION    CONTAINER-RUNTIME
node-1   Ready    v1.16.15   docker://19.3.1
node-2   Ready    v1.16.15   docker://19.3.1
node-3   Ready    v1.16.15   docker://19.3.1

If your runtime shows as Docker Engine, you still might not be affected by the

removal of dockershim in Kubernetes 1.24. Check the runtime

endpoint to see if you use dockershim. If you don't use

dockershim, you aren't affected.

For containerd, the output is similar to this:

NAME     STATUS   VERSION   CONTAINER-RUNTIME
node-1   Ready    v1.19.6   containerd://1.4.1
node-2   Ready    v1.19.6   containerd://1.4.1
node-3   Ready    v1.19.6   containerd://1.4.1
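On larger clusters it can help to list only the nodes that report Docker Engine. The following is a sketch, assuming you feed it tab-separated node name and runtime pairs; the function name nodes_reporting_docker is hypothetical. The jsonpath in the usage comment reads the containerRuntimeVersion field from each Node's status.

```shell
# Hypothetical helper: reads "NODE<TAB>RUNTIME" lines on stdin and prints
# the names of nodes whose runtime string starts with docker://.
nodes_reporting_docker() {
  awk -F '\t' '$2 ~ /^docker:\/\// { print $1 }'
}

# Example:
#   kubectl get nodes \
#     -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.nodeInfo.containerRuntimeVersion}{"\n"}{end}' \
#     | nodes_reporting_docker
```

A node listed here uses Docker Engine, but that alone does not tell you whether it goes through dockershim; the runtime endpoint still needs to be checked.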

Find out more information about container runtimes on the Container Runtimes page.

Find out what container runtime endpoint you use

The container runtime talks to the kubelet over a Unix socket using the CRI

protocol, which is based on the gRPC

framework. The kubelet acts as a client, and the runtime acts as the server.

In some cases, you might find it useful to know which socket your nodes use. For

example, with the removal of dockershim in Kubernetes 1.24 and later, you might

want to know whether you use Docker Engine with dockershim.

Note: If you currently use Docker Engine in your nodes with cri-dockerd, you aren't

affected by the dockershim removal.

You can check which socket you use by checking the kubelet configuration on your

nodes.

- Read the starting commands for the kubelet process:

tr \\0 ' ' < /proc/"$(pgrep kubelet)"/cmdline

If you don't have tr or pgrep, check the command line for the kubelet process manually.

- In the output, look for the --container-runtime flag and the --container-runtime-endpoint flag.

  - If your nodes use Kubernetes v1.23 and earlier and these flags aren't present or if the --container-runtime flag is not remote, you use the dockershim socket with Docker Engine.
  - If the --container-runtime-endpoint flag is present, check the socket name to find out which runtime you use. For example, unix:///run/containerd/containerd.sock is the containerd endpoint.
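The two rules above can be sketched as a small shell function. This is an illustration only: it assumes nodes on Kubernetes v1.23 or earlier, handles only the --flag=value form, and the name kubelet_runtime_endpoint is hypothetical.

```shell
# Hypothetical helper: reads kubelet arguments on stdin, one per line, and
# prints "dockershim", the configured endpoint, or "unknown".
kubelet_runtime_endpoint() {
  runtime=""
  endpoint=""
  while IFS= read -r arg || [ -n "$arg" ]; do
    case "$arg" in
      --container-runtime=*) runtime="${arg#--container-runtime=}" ;;
      --container-runtime-endpoint=*) endpoint="${arg#--container-runtime-endpoint=}" ;;
    esac
  done
  if [ "$runtime" != "remote" ]; then
    # Flag missing or not "remote": dockershim on v1.23 and earlier.
    echo "dockershim"
  elif [ -n "$endpoint" ]; then
    echo "$endpoint"
  else
    echo "unknown"
  fi
}

# Example (arguments in /proc are NUL-separated, so convert them to lines):
#   tr '\0' '\n' < /proc/"$(pgrep kubelet)"/cmdline | kubelet_runtime_endpoint
```

If the function prints an endpoint, compare the socket name with the examples above to identify the runtime.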

If you use Docker Engine with the dockershim, migrate to a different runtime,

or, if you want to continue using Docker Engine in v1.24 and later, migrate to a

CRI-compatible adapter like cri-dockerd.

4 - Check whether Dockershim deprecation affects you

The dockershim component of Kubernetes allows you to use Docker as Kubernetes's container runtime. Kubernetes' built-in dockershim component was deprecated in release v1.20.

This page explains how your cluster could be using Docker as a container runtime,

provides details on the role that dockershim plays when in use, and shows steps

you can take to check whether any workloads could be affected by dockershim deprecation.

Find out whether your app has a dependency on Docker

If you are using Docker for building your application containers, you can still

run these containers on any container runtime. This use of Docker does not count

as a dependency on Docker as a container runtime.

When an alternative container runtime is used, executing Docker commands may either not work or yield unexpected output. This is how you can find out whether you have a dependency on Docker:

- Make sure no privileged Pods execute Docker commands (like docker ps), restart the Docker service (commands such as systemctl restart docker.service), or modify Docker-specific files such as /etc/docker/daemon.json.

- Check for any private registries or image mirror settings in the Docker configuration file (like /etc/docker/daemon.json). Those typically need to be reconfigured for another container runtime.

- Check that scripts and apps running on nodes outside of your Kubernetes infrastructure do not execute Docker commands. Examples include:

  - SSH to nodes to troubleshoot;
  - Node startup scripts;
  - Monitoring and security agents installed on nodes directly.

- Check for third-party tools that perform the privileged operations mentioned above. See Migrating telemetry and security agents from dockershim for more information.

- Make sure there are no indirect dependencies on dockershim behavior. This is an edge case and unlikely to affect your application. Some tooling may be configured to react to Docker-specific behaviors, for example, raising an alert on specific metrics or searching for a specific log message as part of troubleshooting instructions. If you have such tooling configured, test the behavior on a test cluster before migration.
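Part of this checklist can be approximated with a text search over node scripts. The sketch below is a heuristic, not a complete audit: the patterns only cover the examples named above, the function name find_docker_usage is hypothetical, and the directory in the usage comment is a placeholder for wherever your scripts live.

```shell
# Hypothetical helper: lists files under a directory that mention the Docker
# commands, service restarts, or config file named in the checklist above.
find_docker_usage() {
  search_dir="$1"
  grep -rlE 'docker (ps|exec|logs|inspect|top)|systemctl (re)?start docker|/etc/docker/daemon\.json' "$search_dir"
}

# Example:
#   find_docker_usage /path/to/node/scripts
```

A clean result does not prove the absence of a dependency; tools that call the Docker API directly will not show up in a search like this.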

Dependency on Docker explained

A container runtime is software that can

execute the containers that make up a Kubernetes pod. Kubernetes is responsible for orchestration

and scheduling of Pods; on each node, the kubelet

uses the container runtime interface as an abstraction so that you can use any compatible

container runtime.

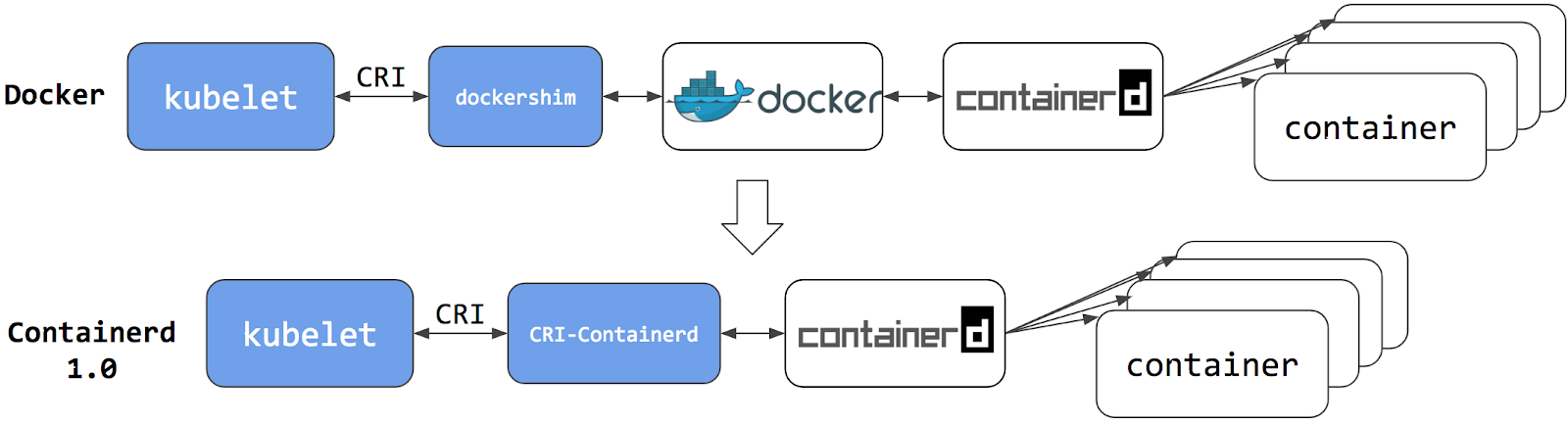

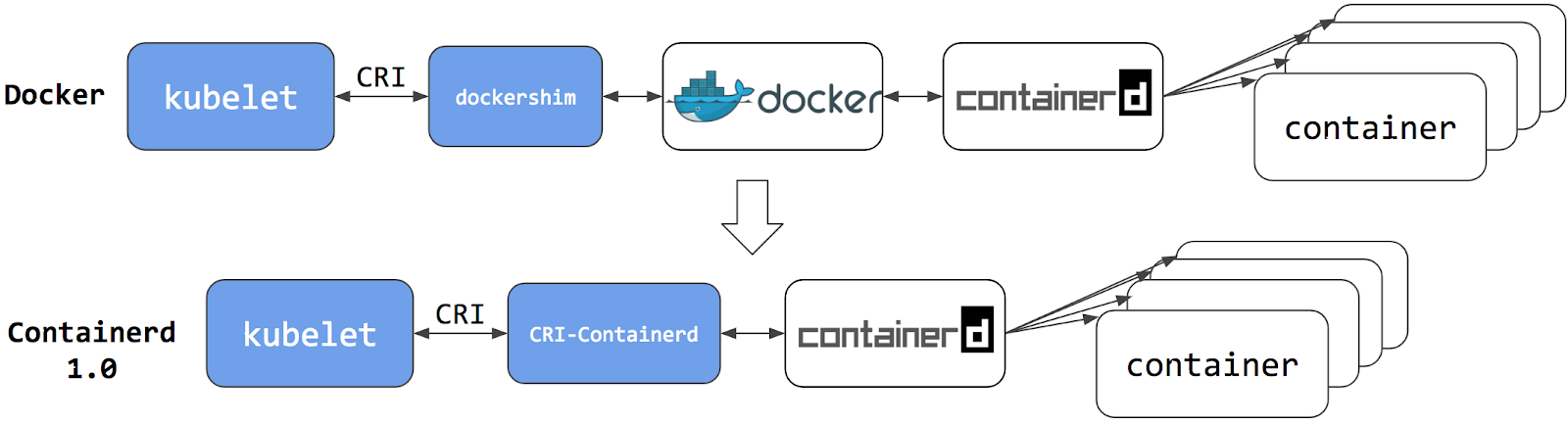

In its earliest releases, Kubernetes offered compatibility with one container runtime: Docker.

Later in the Kubernetes project's history, cluster operators wanted to adopt additional container runtimes.

The CRI was designed to allow this kind of flexibility - and the kubelet began supporting CRI. However,

because Docker existed before the CRI specification was invented, the Kubernetes project created an

adapter component, dockershim. The dockershim adapter allows the kubelet to interact with Docker as

if Docker were a CRI compatible runtime.

You can read about it in the Kubernetes Containerd integration goes GA blog post.

Switching to containerd as a container runtime eliminates the middleman. Container runtimes like containerd can run all the same containers as before. However, because containers are now scheduled directly with the container runtime, they are not visible to Docker. Any Docker tooling or UI you might have used before to check on these containers is no longer available.

You cannot get container information using the docker ps or docker inspect commands. Because you cannot list containers, you also cannot get logs, stop containers, or execute something inside a container using docker exec.

Note: If you're running workloads via Kubernetes, the best way to stop a container is through

the Kubernetes API rather than directly through the container runtime (this advice applies

for all container runtimes, not only Docker).

You can still pull images or build them using the docker build command. However, images built or pulled by Docker are not visible to the container runtime and Kubernetes. They need to be pushed to a registry before Kubernetes can use them.

What's next

5 - Migrating telemetry and security agents from dockershim

Kubernetes' support for direct integration with Docker Engine is deprecated and will be removed. Most apps do not have a direct dependency on the runtime hosting their containers. However, there are still many telemetry and monitoring agents that depend on Docker to collect container metadata, logs, and metrics. This document aggregates information on how to detect these dependencies and links on how to migrate these agents to use generic tools or alternative runtimes.

Telemetry and security agents

Within a Kubernetes cluster there are a few different ways to run telemetry or security agents.

Some agents have a direct dependency on Docker Engine when they run as DaemonSets or

directly on nodes.

Why do some telemetry agents communicate with Docker Engine?

Historically, Kubernetes was written to work specifically with Docker Engine.

Kubernetes took care of networking and scheduling, relying on Docker Engine for launching

and running containers (within Pods) on a node. Some information that is relevant to telemetry,

such as a pod name, is only available from Kubernetes components. Other data, such as container

metrics, is not the responsibility of the container runtime. Early telemetry agents needed to query the

container runtime and Kubernetes to report an accurate picture. Over time, Kubernetes gained

the ability to support multiple runtimes, and now supports any runtime that is compatible with

the container runtime interface.

Some telemetry agents rely specifically on Docker Engine tooling. For example, an agent might run a command such as docker ps or docker top to list containers and processes or docker logs to receive streamed logs. If nodes in your existing cluster use Docker Engine, and you switch to a different container runtime, these commands will not work any longer.

Identify DaemonSets that depend on Docker Engine

If a pod wants to make calls to the dockerd running on the node, the pod must either:

- mount the filesystem containing the Docker daemon's privileged socket, as a volume; or

- mount the specific path of the Docker daemon's privileged socket directly, also as a volume.

For example: on COS images, Docker exposes its Unix domain socket at /var/run/docker.sock. This means that the pod spec will include a hostPath volume mount of /var/run/docker.sock.

Here's a sample shell script to find Pods that have a mount directly mapping the

Docker socket. This script outputs the namespace and name of the pod. You can

remove the grep '/var/run/docker.sock' to review other mounts.

kubectl get pods --all-namespaces \
-o=jsonpath='{range .items[*]}{"\n"}{.metadata.namespace}{":\t"}{.metadata.name}{":\t"}{range .spec.volumes[*]}{.hostPath.path}{", "}{end}{end}' \
| sort \
| grep '/var/run/docker.sock'

Note: There are alternative ways for a pod to access Docker on the host. For instance, the parent directory /var/run may be mounted instead of the full path (like in this example). The script above only detects the most common uses.
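To also catch parent-directory mounts, the final grep in the script can be swapped for a broader filter. This is a heuristic sketch: the function name docker_socket_mounts is hypothetical, and the choice to include /run (commonly the target of the /var/run symlink) and other ancestor directories is an assumption you may want to adjust.

```shell
# Hypothetical helper: reads the script's "namespace:<TAB>name:<TAB>paths"
# lines on stdin and keeps Pods whose hostPath is the Docker socket or one
# of its ancestor directories.
docker_socket_mounts() {
  # Each path is preceded by whitespace and followed by a comma.
  grep -E '[[:space:]](/|/var|/var/run|/run|/var/run/docker\.sock|/run/docker\.sock)/?,'
}

# Example: same jsonpath as the script above, with the last grep replaced.
#   kubectl get pods --all-namespaces \
#     -o=jsonpath='{range .items[*]}{"\n"}{.metadata.namespace}{":\t"}{.metadata.name}{":\t"}{range .spec.volumes[*]}{.hostPath.path}{", "}{end}{end}' \
#     | sort | docker_socket_mounts
```

Matching ancestor directories produces more false positives, so treat the output as a list of Pods to review rather than a confirmed set of dependencies.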

Detecting Docker dependency from node agents

If your cluster nodes are customized and have additional security and telemetry agents installed, check with the vendor of each agent whether it has a dependency on Docker.

Telemetry and security agent vendors

We keep a work-in-progress version of migration instructions for various telemetry and security agent vendors in a Google doc. Please contact the vendor to get up-to-date instructions for migrating from dockershim.